mec vec alg ana sta

1 77 82 67 67 81

2 63 78 80 70 81

3 75 73 71 66 81

4 55 72 63 70 68

5 63 63 65 70 63

6 53 61 72 64 73Permutation and Bootstrap Procedures

PSTAT 234 (Fall 2025)

University of California, Santa Barbara

Bootstrap and Resampling

Test score data (Mardia, Kent and Bibby, 1979)

5 tests: mechanics, vectors, algebra, analysis, and statistics (\(n=88\))

First two tests were closed book, last three open book

Score data as an \(88 \times 5\) data matrix \(\mathbf{X}\), the \(i\) th row of \(\mathbf{X}\) \[ \mathbf{x}_i=\left(x_{i 1}, x_{i 2}, x_{i 3}, x_{i 4}, x_{i 5}\right). \]

First few lines of the data matrix are:

\(\overline{\mathbf{x}}= \frac{1}{88}\sum_{i=1}^{88} \mathbf{x}\) is the vector of column means, \[ \begin{aligned} \overline{\mathbf{x}} & =\left(\bar{x}_1, \bar{x}_2, \bar{x}_3, \bar{x}_4, \bar{x}_5\right) =(38.95,50.59,50.60,46.68,42.31) \end{aligned} \]

Covariance of tests \((j, k)\) is \(G_{jk} = \frac{1}{88} \sum_{i=1}^{n} (x_{ij} - \bar{x}_j)(x_{ik} - \bar{x}_k)\) and the covariance matrix \(\mathbf{G}\) is

\[ \mathbf{G}=\left(\begin{array}{ccccc} 302.3 & 125.8 & 100.4 & 105.1 & 116.1 \\ 125.8 & 170.9 & 84.2 & 93.6 & 97.9 \\ 100.4 & 84.2 & 111.6 & 110.8 & 120.5 \\ 105.1 & 93.6 & 110.8 & 217.9 & 153.8 \\ 116.1 & 97.9 & 120.5 & 153.8 & 294.4 \end{array}\right) \]

Eigenvalues and Eigenvectors of Covariance Matrix

- High correlations among test scores suggest underlying factors affecting student performance. PCA can help identify these factors.

- Covariance matrix \(\mathbf{G}\) has 5 eigenvalues and eigenvectors.

- Eigenvalues (\(\hat{\lambda}_i\)): amount of variance explained by each PC.

- Eigenvectors (\(\hat{\mathbf{v}}_i\)): direction of each PC.

| Eigenvalue (\(\hat{\lambda}_i\)) | Eigenvector (\(\hat{\mathbf{v}}_i\)) |

|---|---|

| 679.2 | (.505, .368, .346, .451, .535) |

| 199.8 | (-.749, -.207, .076, .301, .548) |

| 102.6 | (-.300, .416, .145, .597, -.600) |

| 83.7 | (.296, -.783, -.003, .518, -.176) |

| 31.8 | (.079, .189, -.924, .286, .151) |

Single Factor Model

- If only one factor \(\mathbf{v}\) affects all test scores,

- \(\mathbf{v}\) is a fixed and each student’s scores can be modeled as \[\mathbf{x}_i = Q_i \mathbf{v},\] where \(Q_i\) is a student-specific factor (e.g., “IQ”)

- Only (\(\hat{\lambda}_1\)) is positive; others are zero.

- First eigenvector (\(\hat{\mathbf{v}}_1\)) matches \(\mathbf{v}\).

- Variance explained by first PC: \(\hat{\theta} = \hat{\lambda}_1 / \sum_{i=1}^5 \hat{\lambda}_i=1\).

- All variance explained by one factor.

- In practice, we rarely see \(\hat{\theta} = 1\); real data is more complex.

Bootstrap Estimate of \(\hat{\theta}\)

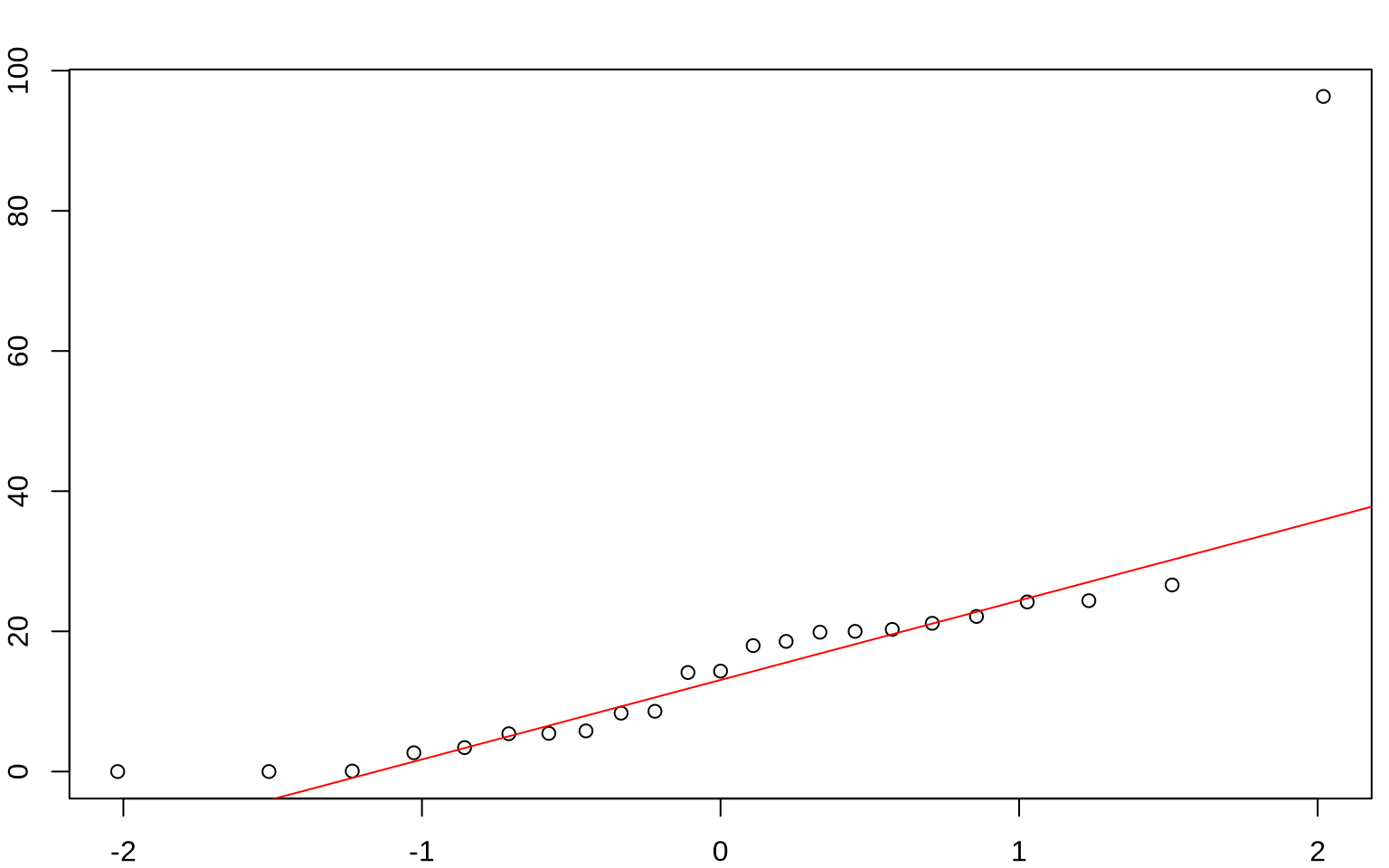

- For covariance matrix \(\mathbf{G}\), \(\hat{\lambda}_1 = 679.2\) and \(\hat{\theta} = 0.619\).

- How accurate is \(\hat{\theta} = 0.619\) as an estimate of \(\theta\)?

- We can use bootstrap to estimate standard error of \(\hat{\theta}\),

- as long as we can compute \(\hat{\theta}^*\) for bootstrap data set.

- Bootstrap data set is matrix \(\mathbf{X}^*\) of size \(88 \times 5\)

- Rows \(\mathbf{x}_i^*\) of \(\mathbf{X}^*\) are a random sample from the rows of data matrix \(\mathbf{X}\), \[ \mathbf{x}_1^*=\mathbf{x}_{i_1}, \mathbf{x}_2^*=\mathbf{x}_{i_2}, \cdots, \mathbf{x}_{88}^*=\mathbf{x}_{i_{88}}, \] where \(i_1, i_2, \cdots, i_{88}\) are IID random integers from 1 to 88.

Bootstrap Estimate of \(\hat{\theta}\)

- Compute the column means of \(\mathbf{X}^*\), \(\bar{x}_j^*=\frac{1}{88} \sum_{i=1}^{88} x_{i j}^* \quad j=1,2,3,4,5.\)

- Bootstrap covariance matrix \(\mathbf{G}^*\) is

\[ G_{j k}^*=\frac{1}{88} \sum_{i=1}^{88}\left(x_{i j}^*-\bar{x}_j^*\right)\left(x_{i k}^*-\bar{x}_k^*\right) \quad j, k=1,2,3,4,5 . \] - Compute the eigenvalues of \(\mathbf{G}^*\), namely \(\hat{\lambda}_1^*, \hat{\lambda}_2^*, \cdots, \hat{\lambda}_5^*\), \[ \hat{\theta}^*=\hat{\lambda}_1^* / \sum_{j=1}^5 \hat{\lambda}_j^* \]

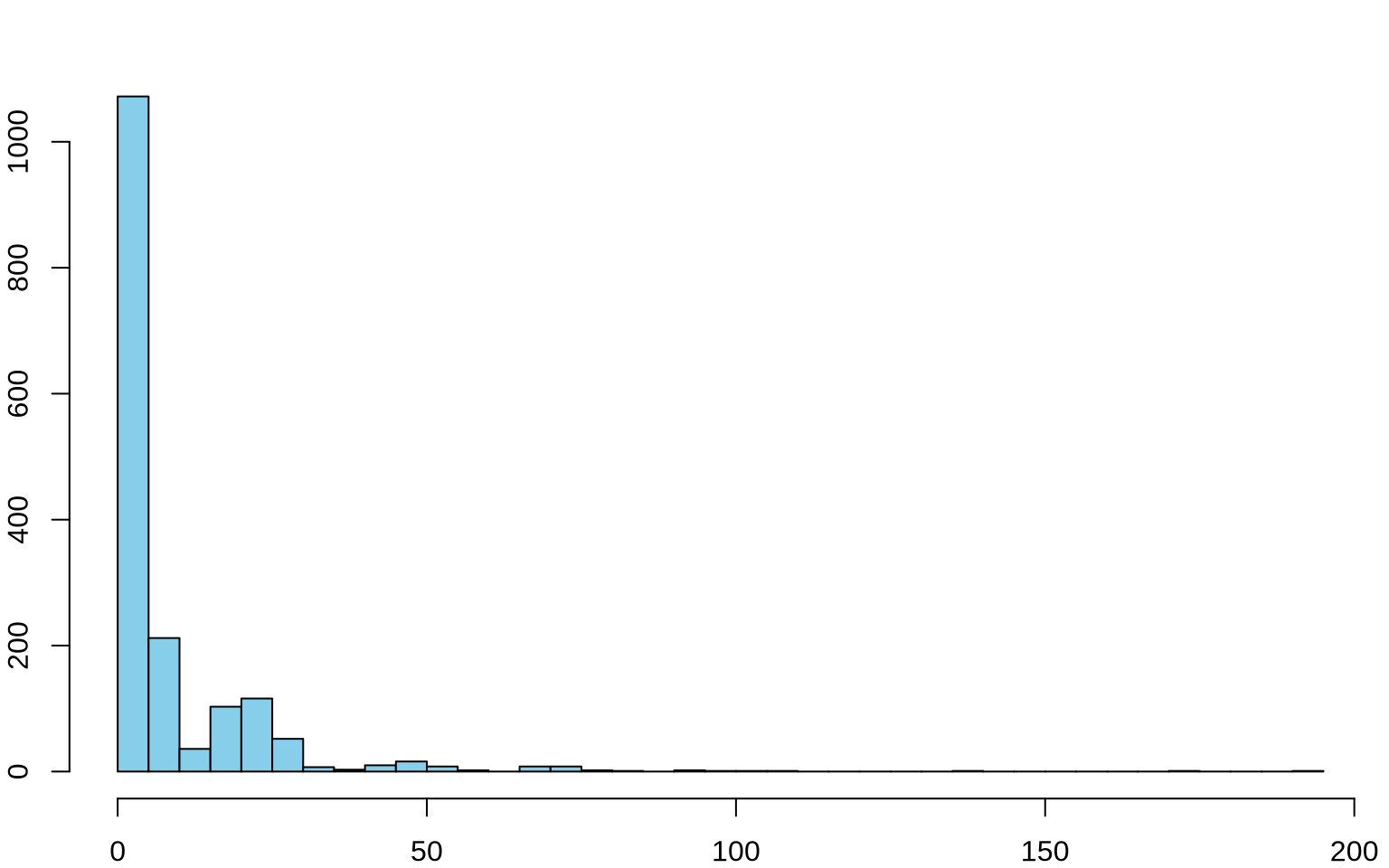

Bootstrap Estimate of \(\hat{\theta}\)

- Standard error of \(\hat{\theta}^*\) from \(B=200\) bootstrap replications is \(\hat{\mathrm{se}}_{200}=.047\).

- Mean of replications (.625) is close to original (.619), indicating low bias.

- Histogram appears roughly normal

- Standard confidence interval for \(\theta\) uses \(\hat{\theta}\) and bootstrap standard error.

| Interval |

|---|

| \(\hat{\theta} \pm z^{(1-0.05)} \hat{\mathrm{se}} = [.542,\ .696]\) |

| \([\hat{\theta}^*_{(0.05)}, \hat{\theta}^*_{(0.95)}] = [.545,\ .693]\) |

First principal component: \(\hat{\mathbf{v}}_1=(.51, .37, .35, .45, .54)\)

- Assigns positive, similar weights to all test scores.

- Represents each student’s overall or average performance.

Second principal component: \(\hat{\mathbf{v}}_2=(-.75,-.21, .08, .30, .55)\)

- Closed-book tests have negative and open-book have positive weights

- Measures contrast between open-book and closed-book performance.

- High \(z\) score indicates much better performance on open-book tests compared to closed-book tests.

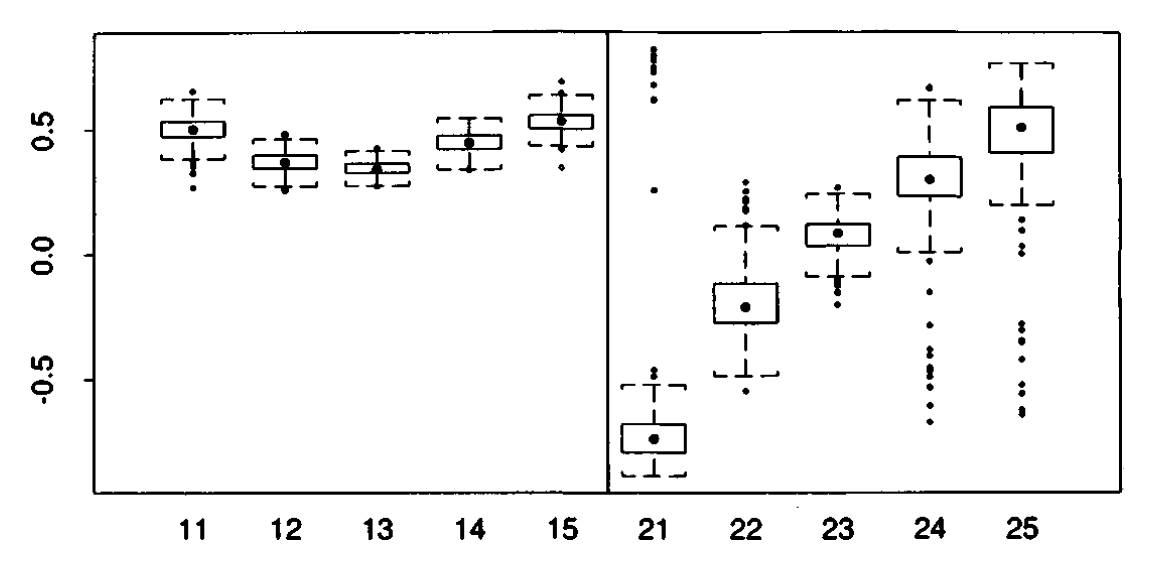

Bootstrap Estimates of Principal Components

Bootstrap Estimates of Principal Components, \(\hat{\mathbf{v}}_1\) (left panel) and \(\hat{\mathbf{v}}_2\) (right panel) shows empirical distribution of the 200 bootstrap replications \(\hat{v}_{i k}^*\).

Comparing Bootstrap Standard Errors

- \(\widehat{\operatorname{se}}_{200}\) bootstrap standard error with \(B=200\) bootstrap replications

- \(\widetilde{\operatorname{se}}_{B, \alpha}\) with \(B=200\) and \(\alpha\)-cutoff: e.g., \(\widetilde{s e}_{200,.90}\) and \(\widetilde{s e}_{200,.95}\).

| First PC: \(\hat{\mathbf{v}}_1\) | \(\hat{v}_{11}\) | \(\hat{v}_{12}\) | \(\hat{v}_{13}\) | \(\hat{v}_{14}\) | \(\hat{v}_{15}\) |

|---|---|---|---|---|---|

| \(\widehat{\operatorname{se}}_{200}\) | .057 | .045 | .029 | .041 | .049 |

| \(\widetilde{\operatorname{se}}_{200, .84}\) | .055 | .041 | .028 | .041 | .047 |

| \(\widetilde{\operatorname{se}}_{200, .90}\) | .055 | .041 | .027 | .042 | .046 |

| \(\widetilde{\operatorname{se}}_{200, .95}\) | .054 | .048 | .029 | .040 | .047 |

| Second PC: \(\hat{\mathbf{v}}_2\) | \(\hat{v}_{21}\) | \(\hat{v}_{22}\) | \(\hat{v}_{23}\) | \(\hat{v}_{24}\) | \(\hat{v}_{25}\) |

|---|---|---|---|---|---|

| \(\widehat{\operatorname{se}}_{200}\) | .189 | .138 | .066 | .129 | .150 |

| \(\widetilde{\operatorname{se}}_{200, .84}\) | .078 | .122 | .064 | .110 | .114 |

| \(\widetilde{\operatorname{se}}_{200, .90}\) | .084 | .129 | .067 | .111 | .125 |

| \(\widetilde{\operatorname{se}}_{200, .95}\) | .080 | .130 | .066 | .114 | .120 |

Bootstrap and Monte Carlo Simulation

- Bootstrap is a Monte Carlo method where we simulate data by resampling from observed data.

- Monte Carlo methods use random sampling to approximate complex mathematical or statistical problems: e.g., card games

- Monte Carlo methods are widely used in various fields, including finance, physics, and machine learning.

- Block Bootstrap: for time series or spatial data, resample blocks of consecutive observations to preserve dependence structure.

- Parametric Bootstrap: assume a parametric model for the data, estimate parameters, and generate bootstrap samples from the fitted model.

- Bayesian Bootstrap: use Bayesian methods to generate bootstrap samples, incorporating prior information about the data.

Two-sample Permutation Test

Comparison of two samples are commonly carried out by hypothesis testing: e.g., two sample t-test.

Under the null hypothesis, the two samples are exchangeable.

Construct the null distribution of the test statistic by first randomly permuting the labels of the two samples.

Two-Sample Permutation Test

- Pool the \(m+n\) values

- repeat 9999 times

- Draw a resample of size \(m\) without replacement.

- Use the remaining \(n\) observations for the other sample.

- Calculate the difference in means, or another statistic that compares samples.

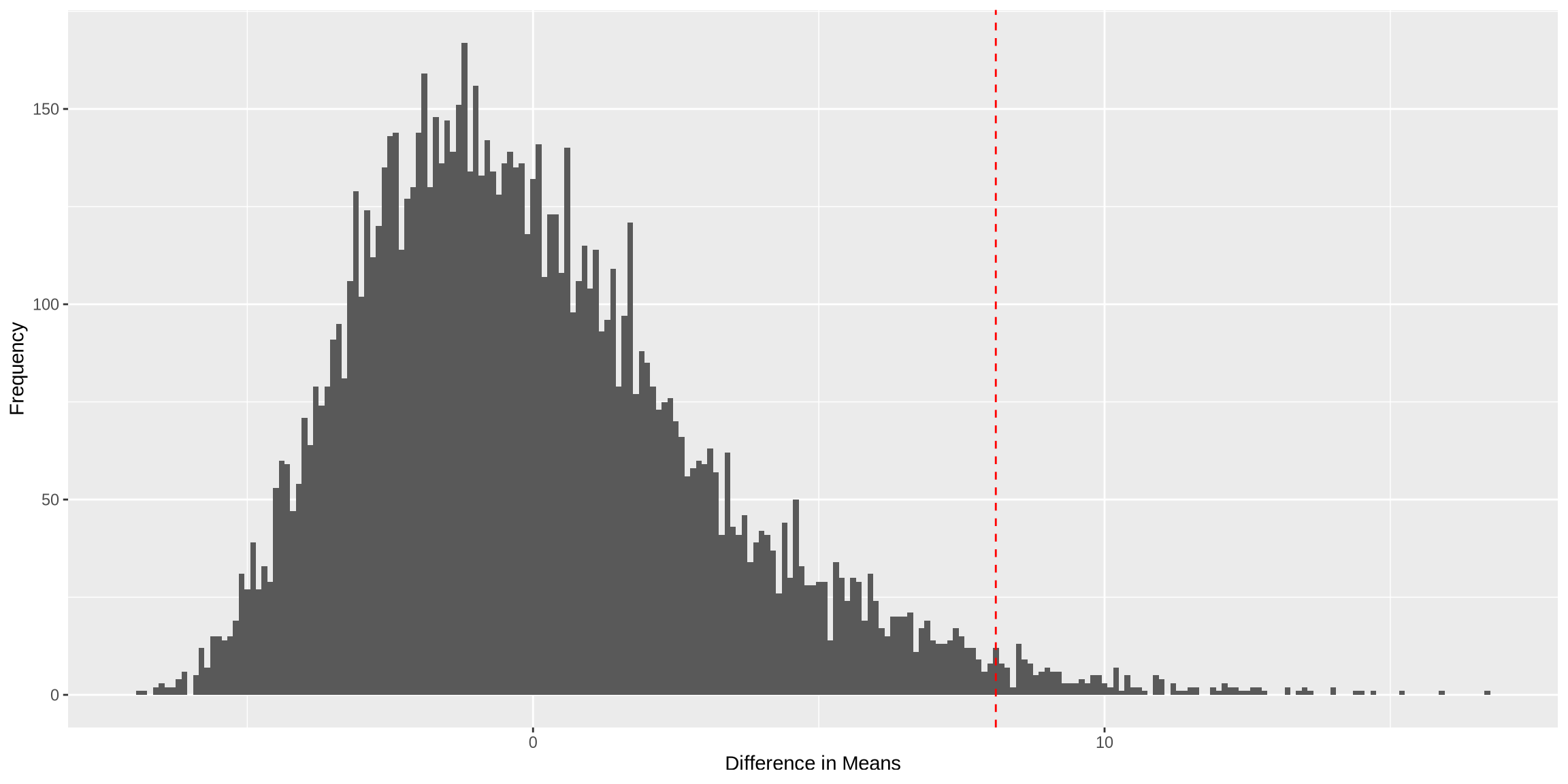

- Plot a histogram of the random statistic values; show the observed statistic.

- Calculate the p-value as the fraction of times the random statistics exceed or equal the observed statistic (add 1 to numerator and denominator); multiply by 2 for a two-sided test.

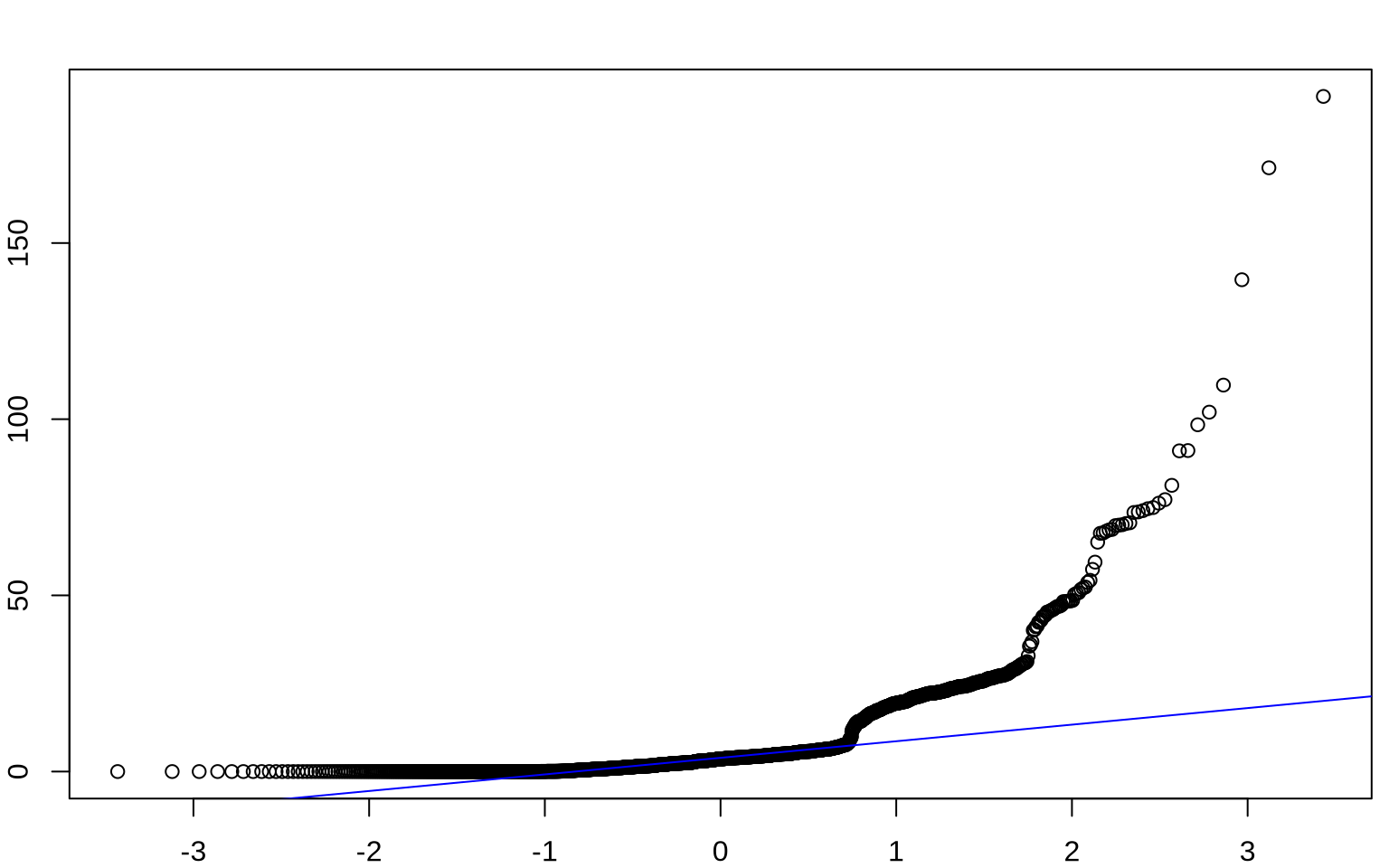

Verizon Data

Verizon was an Incumbent Local Exchange Carrier (ILEC)

- Responsible for maintaining land-line phone service in their area

- Also sold long-distance service

Competitors were termed Competitive Local Exchange Carriers (CLEC)

When something went wrong, Verizon was responsible for all infrastructure repairs

The New York Public Utilities Commission (PUC):

- Monitored fairness by comparing repair times: ILEC vs. CLEC

- Compared repair times for Verizon and different CLECs

- Similar business structure for energy companies, etc.

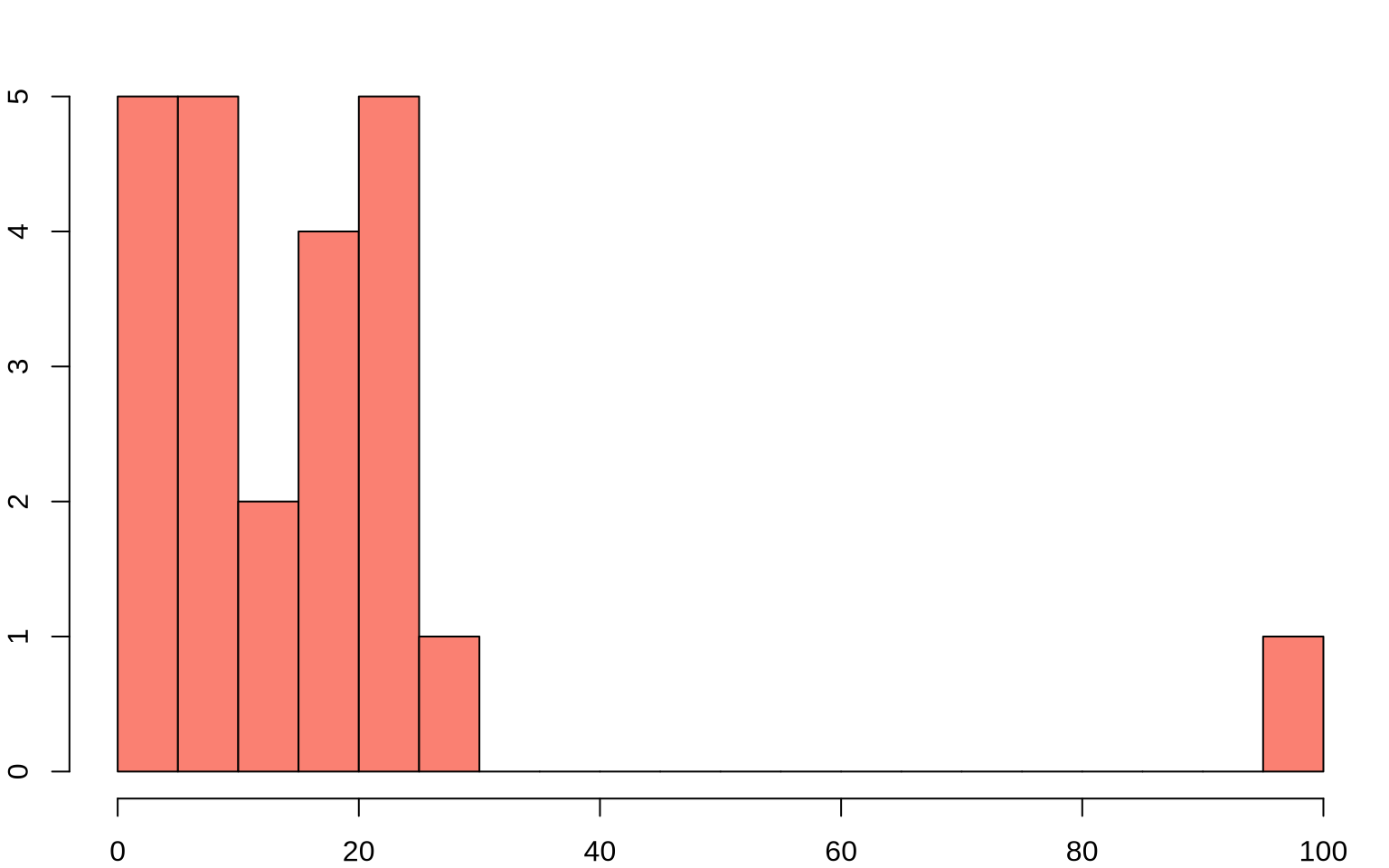

Verizon Data

T-Test: Verizon Example

Code

Welch Two Sample t-test

data: CLEC and ILEC

t = 1.9834, df = 22.346, p-value = 0.02987

alternative hypothesis: true difference in means is greater than 0

95 percent confidence interval:

1.091721 Inf

sample estimates:

mean of x mean of y

16.509130 8.411611 Permutation Test: Verizon Example

Code

# Calculate the observed difference in means

observed_diff <- mean(CLEC) - mean(ILEC)

set.seed(123)

# Function to perform permutation test

permutation_test <- function(data1, data2, num_permutations = 10000) {

combined <- c(data1, data2)

perm_diffs <- numeric(num_permutations)

for (i in 1:num_permutations) {

permuted <- sample(combined)

perm_clec <- permuted[1:length(data1)]

perm_ilec <- permuted[(length(data1) + 1):length(combined)]

perm_diffs[i] <- mean(perm_clec) - mean(perm_ilec)

}

return(perm_diffs)

}

# Perform the permutation test

perm_diffs <- permutation_test(CLEC, ILEC)

# Calculate the p-value

p_value <- mean(abs(perm_diffs) >= abs(observed_diff))

# Plot the permutation distribution

perm_diffs_df <- data.frame(perm_diffs = perm_diffs)

ggplot(perm_diffs_df, aes(x = perm_diffs)) +

geom_histogram(binwidth = 0.1) +

geom_vline(aes(xintercept = observed_diff), color = "red", linetype = "dashed") +

labs(x = "Difference in Means", y = "Frequency")

| Test | p-value |

|---|---|

| t-test | 0.0299 |

| Permutation | 0.0183 |